The Probability Pivot: A New Product Manager’s Guide to Building Products That Actually Think

PM DEPOT

tl;dr: AI in product management isn’t a new fad; we’ve been trying to automate ourselves since 1986. The real shift for modern PMs is moving from "deterministic" software (If X, then Y) to "probabilistic" software (If X, then probably Y). Understanding this "maybe" is the key to building intelligent products that actually solve human problems instead of just following rigid rules.

The Ghost of Product Management Past: Why 1986 is Calling and It Wants Its Code Back


Let’s start with a little game. I want you to read a quote and guess when it was written.

"Applying AI to the software development process is a major research topic. There is tremendous potential for improving the productivity of the programmer, the quality of the resulting code, and the ability to maintain and enhance applications. We are working on intelligent programming environments that help users assess the impact of potential modifications, determine which scenarios could have caused a particular bug, systematically test an application, coordinate development among teams of programmers. Other significant software engineering applications include automatic programming, syntax-directed editors, and automatic program testing."

If you asked a modern LLM about this, it would tell you it sounds like a press release from a hot San Francisco startup in 2024 or 2025. It hits all the right notes: productivity, quality, automated testing, and "intelligent programming environments." It sounds like the pitch for Cursor or GitHub Copilot.

The reality is a bit more humbling. That quote is from an article published in March of 1986. Yes, the year Top Gun came out and everyone was wearing neon leg warmers.

I’m not bringing this up to show off my vintage resume or to suggest that I’m a time traveler. I’m sharing it because it highlights a hilarious and slightly painful truth in tech: we have been trying to solve the same problems for forty years. We were, quite frankly, clueless back then about how hard these problems actually were. We had the vision, but we didn’t have the math. More importantly, we didn’t have the right philosophy.

For a new product manager entering the field today, the "AI" label is everywhere. It’s the garnish on every pitch deck. But to actually build something that isn't just a wrapper for a chatbot, you have to understand the fundamental shift from the "if/then" world to the "probably" world.

The Expert System Trap and the Stanford Doctor

Back in the eighties, our big bet was something called "Expert Systems." The logic was simple: find a human expert, suck their brain dry of every rule they use to make decisions, and code those rules into a computer. We thought that if we could just write enough "If/Then" statements, we could recreate human intelligence.

As a young engineer, I got to sit in on an interview with a physician at Stanford. The "Knowledge Systems Engineer" was trying to map out how this doctor monitored patients. The engineer kept asking for rules. "If the heart rate is X and the blood pressure is Y, do you do Z?"

The doctor looked exhausted. He eventually explained that while there are rules you learn in med school, his actual job was making "educated guesses based on probabilities." He would look at a patient, sense a pattern, form a hypothesis (a guess), and then run tests to see if he was right.

This was a lightbulb moment. We were trying to build a rigid ladder of logic, but the doctor was playing a game of poker with biology. He was dealing in odds, not certainties.

In hindsight, it is easy to see why those early attempts failed. You cannot code enough rules to cover the infinite messiness of the real world. This is what researchers call the "Knowledge Acquisition Bottleneck." It turns out that humans are actually quite bad at explaining why they are good at what they do. We operate on intuition and probability, which are two things that traditional code hates.
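To make that bottleneck concrete, here is a minimal sketch of the if/then style described above. The vitals, thresholds, and names (`RULES`, `advise`) are invented for illustration and are not drawn from any real clinical system. The only point is that any case the rule author did not anticipate simply falls through.

```python
from typing import Callable, Optional

# Hypothetical vitals and thresholds, invented purely for illustration.
Vitals = dict[str, float]
Rule = tuple[Callable[[Vitals], bool], str]

RULES: list[Rule] = [
    (lambda v: v["heart_rate"] > 120 and v["systolic_bp"] < 90, "escalate"),
    (lambda v: v["heart_rate"] < 50, "check medication"),
]

def advise(vitals: Vitals) -> Optional[str]:
    """Return the action of the first matching rule, or None if no rule applies."""
    for condition, action in RULES:
        if condition(vitals):
            return action
    return None  # the case nobody wrote a rule for

print(advise({"heart_rate": 130, "systolic_bp": 85}))   # escalate
print(advise({"heart_rate": 95, "systolic_bp": 150}))   # None
```

The second patient matches no rule, so the system has nothing to say. Adding more rules shrinks that gap but never closes it.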

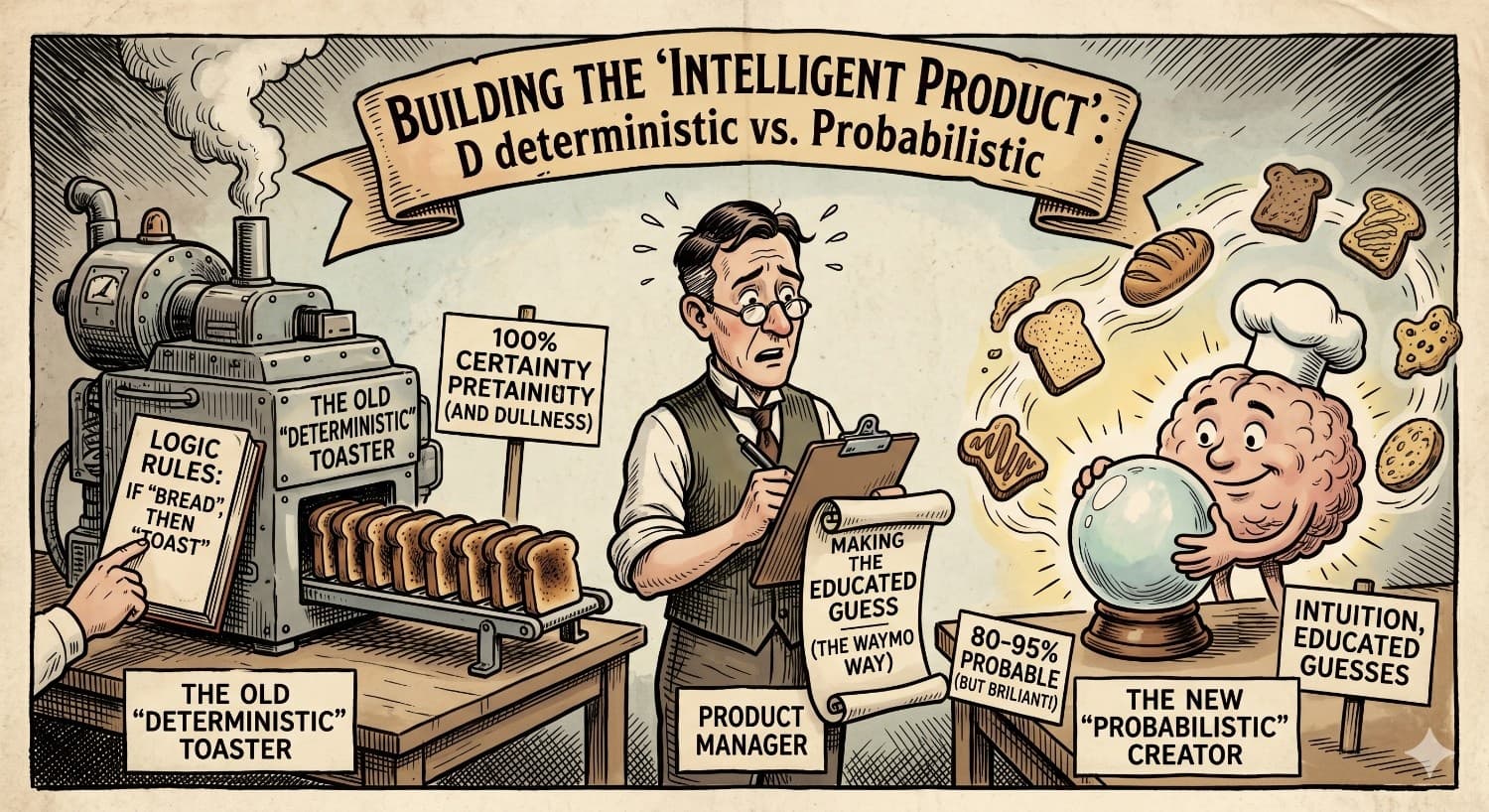

The Great Divorce: Deterministic vs. Probabilistic

If you want to survive as a PM in the next decade, you need to tattoo these two words on your brain: Deterministic and Probabilistic.

A deterministic system is a toaster. You push the lever down, the heating element turns on, and four minutes later, you have toast. If the toaster decides to "hallucinate" and turns your bread into a grilled cheese sandwich, the toaster is broken. Deterministic software is predictable. If you click "Save," the file should save. Every single time.

A probabilistic system is a human chef. You ask the chef to make the "best possible" toast. Most of the time, it’s great. Sometimes, the sourdough is a bit sourer than usual. Sometimes, the crust is a little charred because the chef was trying a new technique. The chef isn't "broken" when the result varies; the variation is actually a side effect of the chef’s intelligence.

For the last forty years, PMs have been taught to build toasters. We write PRDs (Product Requirements Documents) that demand 100 percent certainty. "The system shall do X when Y happens."

But "intelligent products" are different. They are a blend. They take the reliability of the toaster and mix it with the intuition of the chef. The problem is that many PMs, especially in the B2B or regulated space, are terrified of the "chef" part. They say, "Our customers need compliance! They need 100 percent accuracy! We can’t have a system that 'guesses'!"

Here is the niche perspective you won't hear in a standard MBA class: Your customers are already using probabilistic systems every day. They are called "employees."

When a bank manager decides whether to approve a loan, they are using a probabilistic model in their head. When a lawyer predicts how a judge might rule, that’s a probability. If we want our products to be "intelligent," we have to stop demanding that they be toasters and start helping them become better "guessers."
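One way to picture the toaster-and-chef blend is a confidence gate: the product acts on a guess only when the guess is strong enough, and otherwise hands the decision to a person. The sketch below is an assumption about how that could look, not a description of any particular product. The `Guess` type, the `decide` function, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Guess:
    label: str
    confidence: float  # model's estimated probability, between 0 and 1

def decide(guess: Guess, threshold: float = 0.8) -> str:
    """Act on the guess only when it is confident enough; otherwise defer to a person."""
    if guess.confidence >= threshold:
        return f"auto:{guess.label}"
    return "needs_human_review"

print(decide(Guess("approve", 0.93)))  # auto:approve
print(decide(Guess("approve", 0.55)))  # needs_human_review
```

The gate itself is deterministic, which is what makes it auditable. Where to set the threshold is a product decision: in a regulated setting you would likely start high and lower it only as the evidence accumulates.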

The Waymo Lesson: 50 Million Miles of "Maybe"

If you want to see the most impressive intelligent product on the planet right now, don't look at a chatbot. Look at a Waymo.

An autonomous car is the ultimate probabilistic challenge. A deterministic car would be a train on tracks. It knows exactly where it is going and what is in front of it. But a car on a city street in San Francisco? It has to deal with a cyclist who might swerve, a pedestrian who might be looking at their phone, and a rogue pigeon with a death wish.

Waymo doesn't have a rule for every possible scenario. There is no line of code that says "If a toddler in a dinosaur suit runs into the street while holding a balloon, turn left 12 degrees." Instead, the car predicts the most likely path of everything around it. It is constantly asking, "What is the probability that this cyclist will turn left?"

The genius of the Waymo product isn't just the driving; it’s the aggregate learning. Every one of the 1,500 cars in the fleet shares its "guesses" with the others. If one car learns that a specific intersection is tricky because of the way the sun hits the sensors at 4:00 PM, every car learns it. On top of the real-world miles, the system is currently logging over 20 million miles of simulated driving per day.

For a PM, the takeaway here is "Continuous Learning." In the old world, you shipped a feature and you were done. In the intelligent product world, shipping is just the start of the data collection phase. Your product should be smarter on Tuesday than it was on Monday.

The Subtle AI: Spotify and the Art of the Vibe

Not every intelligent product needs to drive a car. Some of the best AI is so subtle you don't even realize it’s there.

Take Spotify’s Discover Weekly. This is a purely probabilistic product. It is guessing what you might like based on what people with similar tastes like. If Spotify were deterministic, it would only play songs by artists you have already liked. That would be a boring product.

Instead, Spotify takes a risk. It plays something weird. If you skip it, the "intelligence" notes that the guess was wrong. If you save it, the "intelligence" gets a hit of dopamine (mathematically speaking) and refines its model.
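The skip-and-save loop can be sketched in a few lines. To be clear, this is not how Spotify actually does it (I have no visibility into their models). It is a deliberately tiny stand-in, with a hypothetical `TasteModel` class that counts saves and skips per genre and turns them into a smoothed probability.

```python
from collections import defaultdict

class TasteModel:
    """Tracks saves and skips per genre and estimates how likely a save is."""

    def __init__(self) -> None:
        self.saves = defaultdict(int)
        self.skips = defaultdict(int)

    def record(self, genre: str, saved: bool) -> None:
        """Store one piece of implicit feedback: a save or a skip."""
        if saved:
            self.saves[genre] += 1
        else:
            self.skips[genre] += 1

    def save_probability(self, genre: str) -> float:
        # Laplace smoothing: with no data yet, the estimate starts at 0.5.
        s, k = self.saves[genre], self.skips[genre]
        return (s + 1) / (s + k + 2)

model = TasteModel()
for saved in (True, True, False):
    model.record("ambient", saved)
print(model.save_probability("ambient"))  # 0.6
print(model.save_probability("polka"))    # 0.5
```

Every skip or save nudges the estimate, so the guess on Tuesday is informed by what happened on Monday.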

As a PM, you should ask: "Where can my product afford to be wrong in exchange for being brilliant?"

If you are building a medical billing app, you probably shouldn't be "risky" with the tax calculations. That part should be deterministic. But maybe the way you surface which claims need urgent attention should be probabilistic. Maybe you can predict which claims are most likely to be rejected based on historical patterns. That is where the value is.
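Here is a rough sketch of what "predict which claims are most likely to be rejected" could mean at its simplest: rank open claims by the historical rejection rate of their payer. The payers, claim IDs, and history are made up, and a real system would use far richer features. The amounts on each claim would still be computed deterministically; only the ordering of the work queue is a guess.

```python
from collections import Counter

# Hypothetical history: (payer, was_rejected) pairs, invented for illustration.
history = [
    ("PayerA", True), ("PayerA", True), ("PayerA", False),
    ("PayerB", False), ("PayerB", False), ("PayerB", True), ("PayerB", False),
]

totals, rejected = Counter(), Counter()
for payer, was_rejected in history:
    totals[payer] += 1
    rejected[payer] += was_rejected  # True counts as 1, False as 0

def rejection_risk(payer: str) -> float:
    """Historical rejection rate for a payer; 0.0 when there is no history."""
    return rejected[payer] / totals[payer] if totals[payer] else 0.0

open_claims = [("claim-101", "PayerB"), ("claim-102", "PayerA"), ("claim-103", "PayerC")]
ranked = sorted(open_claims, key=lambda c: rejection_risk(c[1]), reverse=True)
print([claim_id for claim_id, _ in ranked])  # ['claim-102', 'claim-101', 'claim-103']
```

If the ranking is wrong, someone reviews a claim slightly earlier or later than ideal. That is a cheap mistake, which is exactly why this is a good place to be probabilistic.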

The New Tools: Shopify Magic, Cursor, and the Death of the Blank Page

We are seeing a new wave of "Generative" intelligent products that are changing the way we work. Shopify Magic helps merchants write product descriptions. Cursor helps engineers write code.

The popular perspective is that these tools are "writing" for us. The niche perspective is that these tools are actually "brainstorming partners" that handle the low-level cognitive load.

When you use Cursor to write code, you aren't abdicating your job as an engineer. You are acting as a reviewer. You are shifting from "How do I write this syntax?" to "Is this logic correct?"

This is a massive shift for Product Managers. Your job is no longer just defining the "What." You now have to define the "How we evaluate the What."

If your product generates text, voice, or images, you need to build "evals." You need to create systems to measure whether the output is actually good. This is much harder than traditional QA (Quality Assurance). You can't just check if a button works; you have to check if the "vibe" of the generated content matches the brand.
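A first version of an eval can be as plain as a set of named checks run over a batch of generated outputs, reporting a pass rate per check. The sketch below assumes a hypothetical product-description generator for a kettle; the check names and the banned-word list are invented brand rules. Checks like these only cover the measurable part of the "vibe", so tone usually still needs a human reviewer or a separate grader.

```python
from typing import Callable

# Each check takes generated text and returns True when it passes.
# The checks and the brand rules below are hypothetical examples.
CHECKS: dict[str, Callable[[str], bool]] = {
    "under_200_chars": lambda text: len(text) <= 200,
    "no_banned_words": lambda text: not any(w in text.lower() for w in ("cheap", "guarantee")),
    "mentions_product": lambda text: "kettle" in text.lower(),
}

def run_evals(outputs: list[str]) -> dict[str, float]:
    """Return the pass rate of each check across all outputs (expects a non-empty list)."""
    if not outputs:
        raise ValueError("run_evals needs at least one output")
    return {
        name: sum(check(text) for text in outputs) / len(outputs)
        for name, check in CHECKS.items()
    }

samples = [
    "A stainless steel kettle that boils in under three minutes.",
    "Cheap and cheerful! Guaranteed to impress.",
]
print(run_evals(samples))
# {'under_200_chars': 1.0, 'no_banned_words': 0.5, 'mentions_product': 0.5}
```

Unlike a traditional QA test, the result is a rate rather than a pass or fail, so the requirement becomes something like "at least 95 percent of outputs pass every check" instead of "the button works."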

Practical Advice for the Newer PM

If you are just starting out, don't get distracted by the hype cycles. Don't worry about whether AGI (Artificial General Intelligence) is coming in two years or twenty. That is a distraction for researchers and philosophers.

Your job is to build products that help people today. Here is how you do that:

First, stop thinking of AI as a "feature." You don't "add AI" to a product any more than you "add electricity" to a toaster. It is a fundamental building block. Ask yourself: "If this product could make an educated guess about what the user wants next, what would that guess be?"

Second, embrace the blend. Every great product is a mix. The user interface should be deterministic (the back button should always go back). The core value proposition should increasingly be probabilistic (the search results should be personalized, the recommendations should be smart, the automation should be intuitive).

Third, focus on the data loop. An intelligent product is only as good as the data it learns from. If you don't have a way to capture user feedback (even implicit feedback like a "skip" or a "delete"), your AI will never get smarter. It will just be a static rule-book dressed up in fancy marketing.

Finally, be human. The irony of the AI era is that human skills (empathy, storytelling, and judgment) are more important than ever. Machines are great at probabilities, but they are terrible at "Why." They don't know why a user is frustrated. They don't know why a specific business goal matters.

The doctor at Stanford knew that med school rules were only half the battle. The other half was looking the patient in the eye and understanding the context. As a PM, you are the "Knowledge Engineer" of the modern era. Your job isn't just to write the rules; it’s to understand the soul of the problem you are trying to solve.

We’ve been trying to build these "intelligent programming environments" since 1986. We finally have the tools to do it. Just remember that the goal isn't to build a perfect machine. The goal is to build a product that is smart enough to help a human be a little more brilliant.
